If you have ever typed something into ChatGPT, read the response, and thought "well, that was useless" — welcome to the club. Almost everyone goes through a phase where AI feels overhyped and underwhelming. The outputs are vague. The tone is wrong. You spend more time fixing the result than you would have spent doing the task yourself. And quietly, you start wondering whether AI is genuinely useful or just very good marketing.

Here is the reassuring truth: the gap between disappointing AI results and genuinely impressive ones is usually not about intelligence, technical skill, or having the right subscription. It is about a handful of habits — small shifts in how you interact with these tools — that nobody teaches you explicitly. We made every single one of these mistakes ourselves, some of them for embarrassingly long stretches, before figuring out what was going wrong.

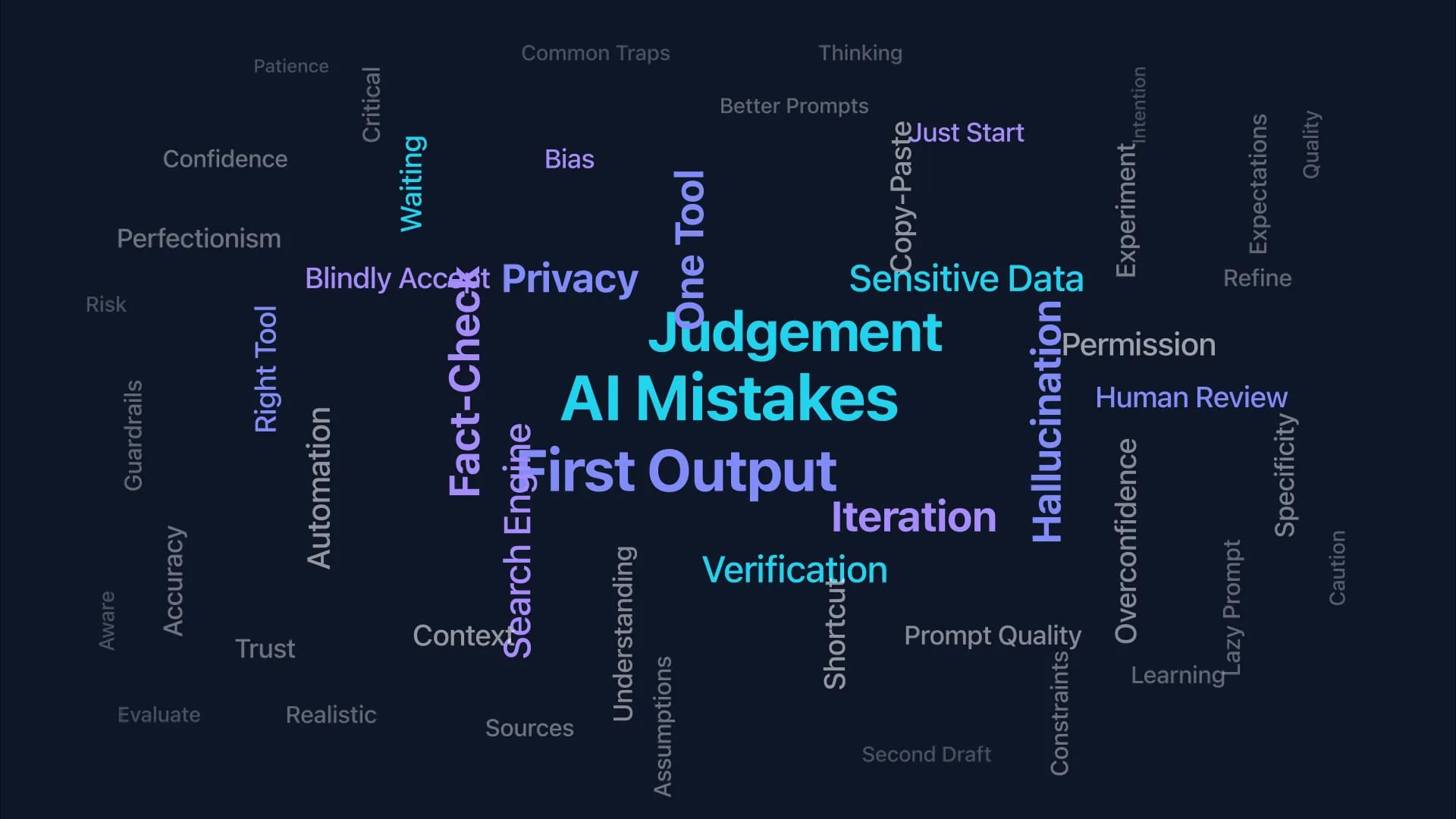

These are the seven most common mistakes we see, along with what to do instead. If even two or three of these resonate, fixing them will change your experience dramatically.

Mistake 1 — Treating AI Like a Search Engine

This is the most common starting point, and it is completely understandable. We have spent two decades training our brains to type short questions into a box and expect answers. "What is the capital of France?" "How do I fix a leaky tap?" Google rewarded brevity. AI does not.

When you ask an AI assistant a bare request such as "Write me an email" or "Give me some marketing ideas," you get a generic answer. The tool is not being lazy. It simply does not have enough information to give you anything better. It is like walking up to a colleague and saying "do a thing" without explaining what thing, for whom, or why.

The fix is to treat AI like you are briefing a capable colleague rather than searching a database. Instead of "Write me an email," try: "I am a project manager. Write a friendly but professional email to my team of 8, letting them know our launch date has moved from March 15 to April 2. Emphasise that the quality work they have done so far is not wasted — we are using the extra time to improve testing. Keep it under 150 words."

The difference in output quality is striking. If you want the full framework for writing prompts that work, our guide on the four-part prompt formula covers it step by step.

Research Callout: In our experience, prompts that include context, a clear task, a format, and constraints produce usable results far more consistently than single-sentence requests. Well-structured prompts routinely produce outputs you can use with minor edits, while vague prompts usually need a complete rewrite.

Mistake 2 — Accepting the First Output

This one is subtle because the first output often looks fine. It is grammatically correct, reasonably relevant, and arrives in seconds. So you use it. But "fine" is not the standard — useful, specific, and genuinely good is the standard. And the first output rarely reaches that bar.

The most effective AI users treat the first response as a starting point, not a finished product. They iterate. "This is good, but make the tone more casual." "The second paragraph is too long — condense it to two sentences." "Add a specific example from the retail industry." Each iteration sharpens the output, and most tasks only need two or three rounds to go from generic to genuinely useful.

Think of it like editing a first draft. No professional writer publishes their first draft. No professional AI user should accept the first output either — at least not without reading it critically and asking whether a round of refinement would make it materially better.

A useful rule of thumb: if the output still saves you time after a round or two of iteration, you are ahead. If it takes four or more rounds to get something usable, the prompt probably needs rewriting rather than more iteration.

⚡ Quick Tip: After getting your first output, try this single follow-up: "What would make this 20% better?" The AI will often identify weaknesses in its own output and fix them in the next version.

Mistake 3 — Pasting Sensitive Data Without Thinking

In the rush to see what AI can do, it is easy to paste in a client spreadsheet, an internal strategy document, or personal employee information without pausing to consider where that data goes. This is not paranoia — it is a genuine professional responsibility.

On many consumer AI plans, the default settings allow your conversations to be used to train future models. That means confidential business information, personal data, or client details you paste into a chat could, in principle, surface in outputs generated for other users. The risk is small but real, and in regulated industries it can create serious compliance issues.

The practical approach is straightforward. First, check your tool's data settings. ChatGPT allows you to disable training on your conversations in Settings. Claude's commercial API and team plans do not train on your data by default. Gemini's data handling depends on whether you are using a personal or Workspace account. Second, develop a simple habit: before pasting anything, ask yourself "would I be comfortable if this appeared on a public website?" If the answer is no, either anonymise the data first or use an enterprise-tier account with stronger privacy guarantees.

This does not mean you cannot use AI with sensitive work. It means being thoughtful about what you share and with which tier of which tool. Most organisations that use AI effectively have simple guidelines — not lengthy policies, just clear rules about what goes in and what stays out.

🧠 Quick Challenge: Think about the last thing you pasted into an AI tool. Did it contain any names, financial figures, or information a client would not want shared?

Answer: If it did, check your tool's data settings now. On ChatGPT: Settings > Data Controls > disable "Improve the model for everyone." On Claude Team/Enterprise: data is not used for training by default. On Gemini in Workspace: check your admin's settings.