You have probably tried AI at some point. Maybe you asked it to draft an email, got something that read like a corporate press release, deleted it, and wrote the thing yourself. Or you spent twenty minutes constructing the perfect prompt, received a response that was almost right, and spent another twenty minutes editing it — at which point you could have just done the task.

If AI has not been delivering for you, you are not imagining it. If you are feeling a bit disillusioned, as if AI just is not for you, that frustration is valid, and it almost certainly has nothing to do with your ability. There is a specific reason, and it is not the one most people assume.

The Problem Is Not Your Prompts

Most AI advice starts with the tool. It shows you impressive demonstrations — AI writing a business plan in sixty seconds, AI analysing a spreadsheet, AI answering questions in five languages — and then leaves you to work out where any of that fits into your actual Tuesday afternoon.

That gap between "AI is impressive" and "AI is useful to me" is where most professionals get stuck. The reason is not a lack of prompting skill (though a simple formula does help). It is that nobody has helped them identify where in their work AI actually belongs. We hit this exact wall ourselves: we could see the demos and read the case studies, and still could not make it click for our own work. The problem was not the tool. We simply had not asked the right question yet.

Knowing how to write a prompt matters far less than knowing which problem to give it. You could be an excellent driver and still go nowhere if you have not decided on a destination.

This article helps you find your first genuine AI use case. It skips the car manual and starts with the destination.

Three Questions to Find Your First Use Case

You do not need to understand how AI works at a technical level, complete a course, or read about the latest releases. You need to answer three questions about your own work.

What do you repeat?

Look at your week. What do you do more than once that follows roughly the same pattern each time? Weekly status updates. Meeting agendas. Briefing documents. Responses to common client questions. Job description templates. Performance review frameworks.

These tasks are strong candidates because AI is good at producing consistent first drafts quickly. Once you have a prompt that works for one instance of the task, you can reuse it, and the effort you invest the first time pays back every time after that. To get started, browse the Prompt Library for ready-made prompts you can try on your first task.

Example: A project manager writes five short team updates every Friday. She captures the week's bullet points and asks AI to shape them into her standard update format. What used to take 45 minutes now takes 12. The tone still sounds like her — she always reviews and adjusts — but the structural work is done.

What do you dread?

Think about the tasks that sit on your to-do list for days longer than they should. Not because they are technically difficult, but because starting them takes disproportionate energy. Performance review narratives. Summarising a long document. Writing the opening paragraph of anything.

From what we've seen, AI is often most useful here — not because it eliminates the work, but because it eliminates the blank page. A mediocre first draft that exists is almost always easier to improve than a blank document that does not. Once something is in front of you, the resistance drops sharply.

Example: A freelance consultant writes proposals for new projects. He knows the work, but dreads the executive summary section. He now pastes his discovery notes into AI and asks for a first draft. He edits it heavily — it never goes out unchanged — but the task that used to take two hours now takes forty minutes, and he no longer avoids it.

What takes longer than it should?

These are the tasks where the gap between what you are trying to achieve and the time it actually takes feels unreasonable. Consolidating research from multiple sources. Reformatting a document. Translating technical content into plain language for a non-specialist audience. Writing the same type of thing repeatedly in slightly different forms.

A useful test: if you have ever thought "this should take ten minutes and it is taking an hour," that task is worth a closer look.

Example: An HR manager regularly communicates policy updates to staff. The policies are written in dense, procedural language — accurate, but impenetrable. Rewriting them for a general audience used to take most of a morning. She now pastes the policy text into AI, asks for a plain-English summary, and uses that as her working draft. The same task now takes twenty minutes.

🧠 Quick Challenge: Your colleague says they tried AI to write a client proposal but it "didn't work" — the output was generic and they ended up rewriting everything. Based on what you've read, what's the best advice?

- A) Tell them to take a prompt engineering course to write better prompts

- B) Help them identify one specific, repeatable part of the proposal process where AI could draft a first version

- C) Suggest they switch to a more powerful AI model

Answer: B) The article's core argument is that the problem is rarely prompting skill — it is choosing the wrong task. Rather than trying to get AI to write an entire proposal, your colleague should identify one repeatable element (like the executive summary or standard boilerplate sections) where a first draft saves the most time. As the article puts it, knowing which problem to give AI matters far more than knowing how to write the prompt.

What This Looks Like in Practice

These are not edge cases. They are ordinary tasks that most professionals encounter in some form.

A marketing coordinator who attends three client briefings a week used to spend thirty minutes after each one typing up notes and action points. She now records a voice memo during the meeting, uses AI to transcribe and summarise it, and reviews the output for accuracy. Her briefing notes are consistently more structured than before, and she has reclaimed most of the ninety minutes she used to spend on write-ups each week.

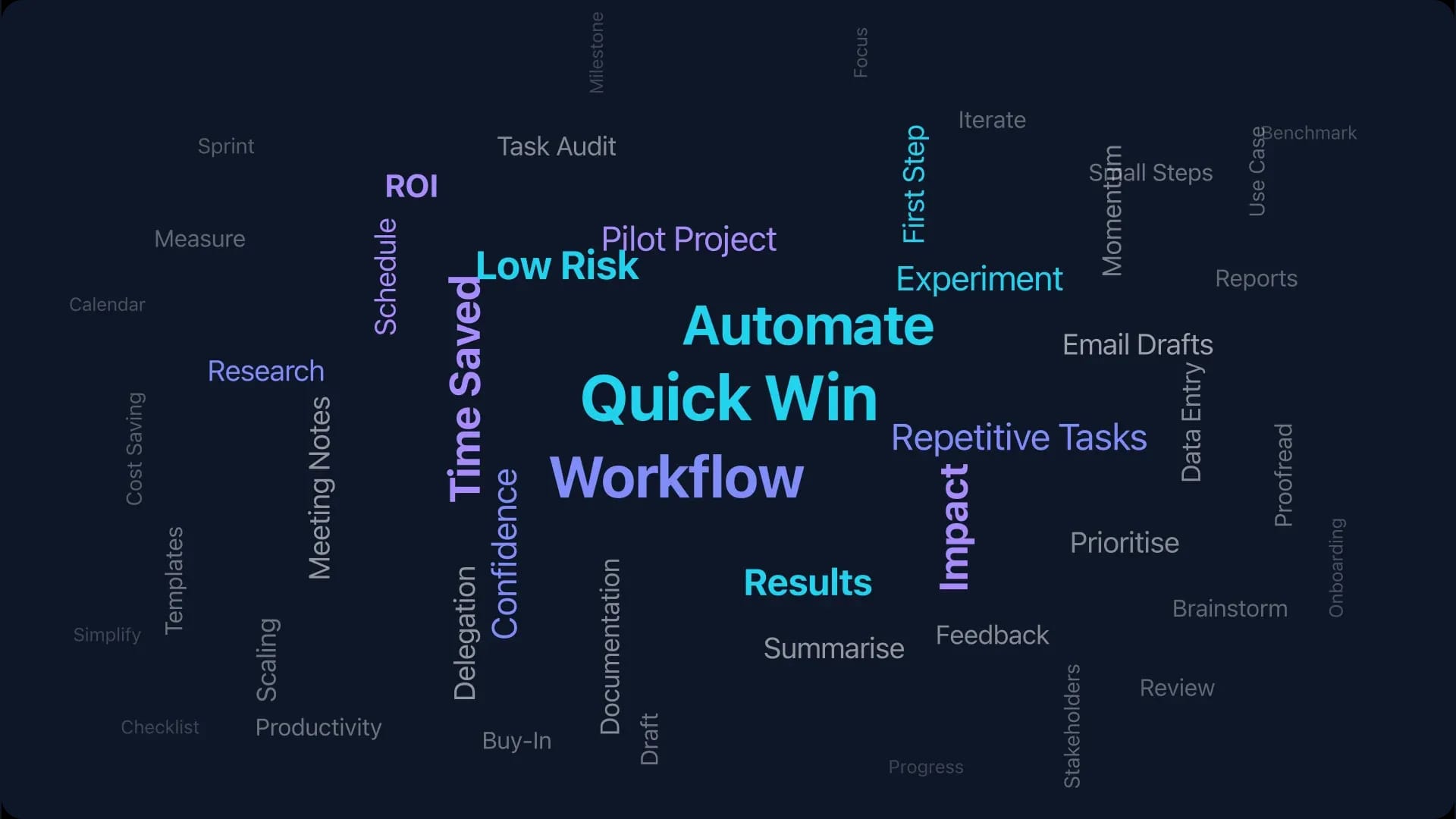

⚖️ Time Saved Across Real Use Cases:

- Weekly team updates — 45 min down to 12 min

- Client proposals — 2 hours down to 40 min

- Policy rewrites — half a day down to 20 min

- Meeting note write-ups — 90 min/week down to 30 min/week

- Customer enquiry replies — 10 min each down to 2 min each

Source: the examples described in this article

A small business owner who sells handmade furniture receives similar enquiries repeatedly — questions about lead times, custom sizing, materials, and care instructions. He built a small set of AI-drafted responses to common questions. He still personalises every reply, but the drafts mean he can respond thoughtfully in two minutes rather than ten.

Neither person has attended an AI training course. Neither knows what a transformer model is. Both made a practical decision about one specific task and found that it worked.