Your colleague mentions "hallucinations" in a meeting. Your IT department emails about "context windows." A LinkedIn post says you need "prompt engineering skills." You nod along and Google it later.

If that's been you, there's nothing to feel awkward about — these terms are genuinely confusing, and most of them didn't exist in everyday conversation two years ago.

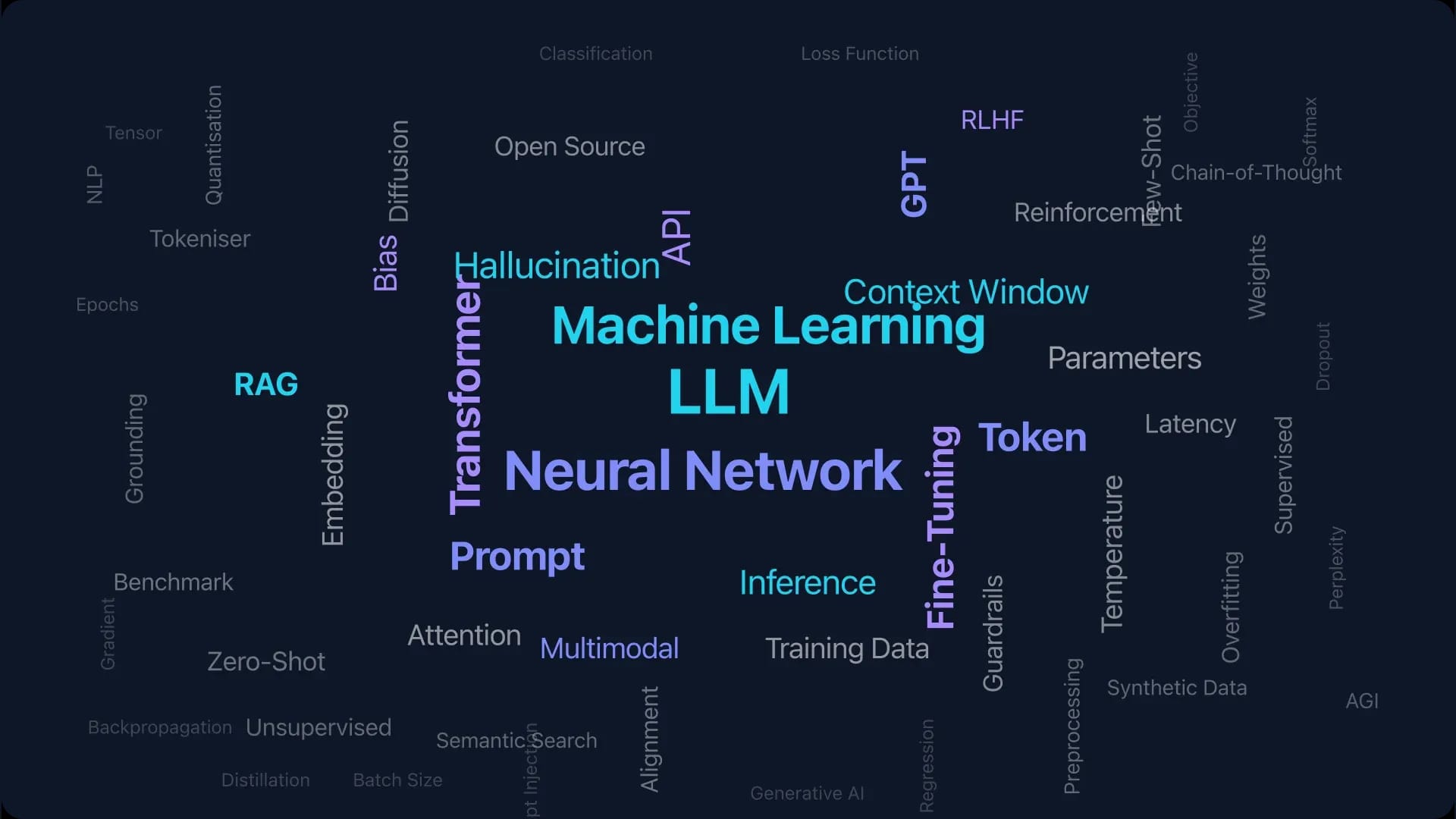

This glossary fixes that. Thirty terms — not 131 — chosen because they're the ones that come up when professionals use AI tools at work. Each definition is short enough to read between meetings and specific enough to use in conversation with your team.

We've grouped them by when you'll need them: getting started, writing better prompts, understanding what goes wrong, and knowing what your IT department is talking about.

Getting Started: The Basics

Artificial Intelligence (AI)

Software that performs tasks normally requiring human judgement — recognising patterns, generating text, making predictions. The AI tools you'll use at work (ChatGPT, Claude, Gemini) are a narrow type called generative AI. They generate new text, images, or code based on patterns learned from training data. They do not think, understand, or have opinions.

Large Language Model (LLM)

The technology behind ChatGPT, Claude, and Gemini. An LLM is a neural network trained on vast amounts of text to predict what word comes next. That prediction ability, scaled up, produces surprisingly coherent writing, analysis, and code. Key point: LLMs generate statistically probable text; they do not look facts up in a database, which is why a fluent answer can still be wrong. (For a deeper explanation, see How Large Language Models Work.)
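If you like seeing ideas in code, here is a toy version of "predict the next word." It is only a sketch: it counts which word follows which in a tiny sample sentence, whereas a real LLM uses a neural network trained on far more text. The names `train` and `predict_next` and the sample text are made up for illustration.

```python
from collections import Counter, defaultdict

def train(text: str) -> dict:
    """Count, for every word, which words follow it and how often."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows: dict, word: str):
    """Return the most frequent follower of `word`, or None if unseen."""
    options = follows.get(word.lower())
    if not options:
        return None
    return options.most_common(1)[0][0]

# Hypothetical "training data": three short email phrases.
corpus = "thank you for your email thank you for your time thank you for the update"
model = train(corpus)

print(predict_next(model, "thank"))  # you
print(predict_next(model, "for"))    # your (seen twice, versus "the" once)
```

Notice that the toy model never checks whether anything is true. It only repeats the most common pattern it has seen, which is the same reason a real LLM can sound confident and still be wrong.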

Generative AI

AI that creates new content (text, images, code, audio) rather than just classifying or sorting existing data. When you ask ChatGPT to draft an email, it generates that email word by word. When Midjourney creates an image, it generates pixels based on your description. The output usually differs each time, even for the same prompt.

Machine Learning

The broader field that LLMs belong to. Machine learning is software that improves through exposure to data rather than being explicitly programmed with rules. Your email spam filter uses machine learning — it learned what spam looks like from millions of examples, and it gets better as you mark emails as spam or not spam.

Neural Network

The architecture behind modern AI. Inspired by the human brain, a neural network is layers of mathematical functions that process data. Information flows through these layers, getting refined at each step. You don't need to understand the maths — just know that when someone says "the model," they mean a neural network that has been trained on specific data for a specific purpose.

Training Data

The information an AI model learned from. GPT-4 was trained on text from the internet, books, and other sources. Training data matters because it determines what the model knows and what biases it carries. If the training data contains mostly American English, the model defaults to American spellings and cultural references. If it contains outdated information, the model will confidently repeat outdated facts.

Writing Better Prompts

Prompt

The instruction you give an AI tool. "Write me a summary of this report" is a prompt. The quality of your output depends almost entirely on the quality of your prompt — vague instructions produce vague results. A good prompt includes context (who you are, what you're working on), the specific task, the format you want, and any constraints.
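The four ingredients above can be sketched as a small template. This is one possible way to lay a prompt out, not a required format; the function name `build_prompt`, the labels, and the example wording are all assumptions made for illustration.

```python
def build_prompt(context: str, task: str, output_format: str, constraints: str) -> str:
    """Join the four parts of a prompt into one labelled block of text."""
    parts = [
        ("Context", context),
        ("Task", task),
        ("Format", output_format),
        ("Constraints", constraints),
    ]
    # Skip any part left empty so the prompt stays tidy.
    return "\n".join(
        f"{label}: {text.strip()}" for label, text in parts if text.strip()
    )

prompt = build_prompt(
    context="I am an HR manager preparing for a quarterly board meeting.",
    task="Summarise the attached staff survey report.",
    output_format="Five bullet points, one sentence each.",
    constraints="UK English, no jargon, under 120 words.",
)
print(prompt)
```

You would paste the printed text into your AI tool as-is. The labels are there to make sure none of the four parts gets forgotten.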

Prompt Engineering

The skill of writing prompts that consistently produce useful output. In our experience, it's not a science — it's more like learning to give clear briefs to a capable but literal-minded colleague. The core techniques: provide context, specify the output format, give examples of what good looks like, and break complex tasks into steps. (See our practical guide to the 4-part prompt formula for a simple structure that works, or browse the Prompt Library for ready-to-use examples.)

Context Window

The amount of text an AI model can process in a single conversation, measured in tokens (one token is roughly ¾ of an English word). Claude's context window is 200,000 tokens, or roughly 150,000 words: enough for an entire book. ChatGPT's varies by model. The practical impact: if your conversation or uploaded document exceeds the context window, the model loses access to earlier parts. If you've ever had an AI seem to "forget" what you told it earlier in a long conversation, this is probably why, and it catches everyone off guard the first time. For a document too long to fit, the model may miss information from page 3 while answering about page 47.
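To see why an AI can "forget," here is a rough sketch of one way a chat tool might handle a conversation that no longer fits: keep the newest messages and drop the oldest. Real tools differ in how they handle overflow, so treat this as an assumption. The helper names, the tiny 20-token window, and the ¾-word estimate are illustrative only.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate using the rule of thumb: 1 token is about 3/4 of a word."""
    words = len(text.split())
    return (words * 4 + 2) // 3  # words divided by 0.75, rounded up

def trim_to_window(messages: list, window_tokens: int) -> list:
    """Keep the most recent messages that fit; older ones fall out."""
    kept = []
    used = 0
    for message in reversed(messages):  # start from the newest message
        cost = estimate_tokens(message)
        if used + cost > window_tokens:
            break  # everything older than this point is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore oldest-to-newest order

conversation = [
    "My name is Priya and I work in procurement.",
    "Please summarise the attached supplier report.",
    "Now turn that summary into three bullet points.",
]

# A deliberately tiny window so the effect is visible.
visible = trim_to_window(conversation, window_tokens=20)
print(visible)  # the first message (with the name) no longer fits
```

With this tiny window, the model would no longer see the message containing the user's name, which is exactly the "forgetting" effect described above.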

Token

The unit AI models use to process text. One token is roughly ¾ of a word in English. Longer or less common words are often split into several tokens, so a two-word phrase like "Artificial intelligence" can come to more than two tokens; the exact count depends on the model. Tokens matter for two reasons: they determine how much text fits in the context window, and they determine what you pay on API-priced tools. When a pricing page says "1M input tokens," that's roughly 750,000 words.
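The arithmetic is simple enough to check yourself. The sketch below uses the ¾ rule of thumb from this entry; the price of 3.00 per million tokens is a made-up figure for illustration, not any provider's real rate.

```python
def tokens_to_words(tokens: int) -> int:
    """Convert tokens to approximate English words (1 token is about 3/4 word)."""
    return tokens * 3 // 4

def api_cost(tokens: int, price_per_million: float) -> float:
    """Cost of processing `tokens` at a given price per one million tokens."""
    return tokens / 1_000_000 * price_per_million

print(tokens_to_words(1_000_000))  # 750000
print(api_cost(250_000, 3.00))     # 0.75 (hypothetical price)
```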

System Message

A hidden instruction that shapes how an AI model behaves throughout a conversation. When you use ChatGPT through a company integration, there's likely a system message telling it "You are a customer service assistant for [Company]. Never discuss competitor products." You can set your own system messages in most tools to create consistent behaviour, such as telling Claude to always respond in UK English and use a formal tone.
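Behind the scenes, many chat tools represent a conversation as a list of messages, each tagged with who "said" it. The sketch below shows that general shape only. The field names `role` and `content` follow a common convention, but exact names and structure vary by provider, so treat them as assumptions. No AI service is called here.

```python
def build_conversation(system_message: str, user_message: str) -> list:
    """Place the system message first so it applies to everything after it."""
    return [
        {"role": "system", "content": system_message},  # hidden from the end user
        {"role": "user", "content": user_message},      # what the person typed
    ]

messages = build_conversation(
    system_message="Always respond in UK English and use a formal tone.",
    user_message="Draft a short note thanking the team for last week's launch.",
)

for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The person typing only sees their own message and the reply, but the model receives both entries, which is why the system message can quietly shape every answer.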

Temperature

A setting that controls how predictable or creative the AI's output is. Temperature 0 means the model always picks the most likely next word — producing consistent but potentially boring text. Temperature 1 means more randomness — more creative but more likely to go off-track. For business writing, keep it low (0-0.3). For brainstorming, turn it up (0.7-1.0). Most tools set a default so you never have to adjust it.
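For the curious, here is a minimal sketch of what temperature does when a model chooses its next word. The candidate words and their scores are invented for illustration, and real models choose among tens of thousands of options, but the principle is the same: dividing the scores by the temperature before picking makes the choice more or less random.

```python
import math
import random

def sample_next_word(scores: dict, temperature: float, rng: random.Random) -> str:
    """Pick a next word from scored candidates at a given temperature."""
    if temperature == 0:
        # Temperature 0: always take the single highest-scoring word.
        return max(scores, key=scores.get)
    # Lower temperature widens the gaps between scores; higher narrows them.
    scaled = {word: score / temperature for word, score in scores.items()}
    top = max(scaled.values())
    # Turn the scaled scores into positive weights for a random draw.
    weights = [math.exp(value - top) for value in scaled.values()]
    return rng.choices(list(scaled), weights=weights, k=1)[0]

# Hypothetical scores for the word after "Kind ..." in an email sign-off.
scores = {"regards": 2.0, "wishes": 1.5, "vibes": 0.2}
rng = random.Random(42)  # fixed seed so repeated runs give the same draws

print([sample_next_word(scores, 0, rng) for _ in range(5)])    # always "regards"
print([sample_next_word(scores, 1.0, rng) for _ in range(5)])  # a mix of words
```

At temperature 0 you get "regards" every time. At 1.0 the less likely options start to appear, which is welcome when brainstorming and less welcome in a client email.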